CitationBomb, the artwork by Zach Kaiser conceived for Art Journal Open, and ScholarStat, his new work featured in the Summer 2018 issue of Art Journal, form only two nodes of a complex matrix designed by the artist to engage us in critical awareness of and potential resistance to the data-driven quantification of our lives as scholars, artists, designers, thinkers, and teachers. Many of us who occupy these roles have long fought against the fetishization of data and concomitant information gathering—what numerical value applies to achieving the arc of a compelling paragraph? What algorithm measures the importance of the time spent sitting in front of a painting with a colleague, developing ideas and discussing the artist’s engagement with complex visual problems?

For me, the fundamental force of Kaiser’s art lies not in satire but in craft. I find myself placing his work in dialogue with the early twentieth-century pioneers in time management, Frank and Lillian Gilbreth. Using flashing lights attached to the hands and arms of craftspeople, the Gilbreths employed photography and film to map the gestures of these workers so that the optimal movement could be pulled apart and replicated in an attempt to make efficient processes for distribution to multiple unskilled workers. While disassembling craft, for me the Gilbreths’ own art was to visualize this destruction, this analysis. They therefore performed analytics.

Zach Kaiser’s two artworks, here in Art Journal Open and in the pages of Art Journal this summer, strike me as a cognate to the three-dimensional models the Gilbreths’ project produced and that Frank Gilbreth holds in a well-known photograph. These models, and Kaiser’s projects, make manifest the un-usefulness of analytics, something Kaiser’s work deploys to gum up the algorithms themselves. They also, perhaps unknowingly for the Gilbreths, reinsert art making; the very power of the work is that it is embedded in the carefully, precisely, attentively created form. Perhaps only by recognizing the art in the analytic can we understand its potentiality, its naturalization in our daily artistic and academic lives, and its profound destructive force.

—Rebecca M. Brown, Editor-in-Chief, Art Journal

In 2009 Joeran Beel and Bela Gipp conducted the first empirical study that attempted to reverse engineer Google Scholar’s ranking algorithm for academic writers. In their paper, they write: “Google Scholar seems to be more suitable for finding standard literature than gems or articles by authors advancing a new or different view from the mainstream.” They conclude, “Google Scholar also strengthens the Matthew Effect: articles with many citations will be more likely to be displayed in a top position, get more readers, and receive more citations, which then consolidate their lead over articles that are cited less often.”1 Scholarly metrics, such as those used by Google Scholar, incentivize clickbait scholarship, create reductive ideas about what avenues of research should be pursued within a given discipline, or conceal potentially transformative scholarship because of its unpopularity. New scholarly metrics platforms amplify these issues while rendering the scholarly act and the scholars themselves as fundamentally computable. It is incumbent upon us to subvert them, to put scholarly metrics systems into crisis such that a new equilibrium can be sought. CitationBomb is an artistic attempt at such an intervention.

In a climate of public austerity designed specifically to benefit private firms and strengthen the market’s stranglehold on everyday life, university administrators are told that they need to be strategic about how they steer their institutions. “Strategic,” here, of course, is code for “market-oriented.” Predictive analytics—designed by the likes of Academic Analytics, Simitive, and the new ScholarStat—present themselves as the perfect tools to mitigate risk (and individualize it by offloading it onto faculty members themselves) in this environment through evaluative metrics that quantify, categorize, and rank faculty activity. The near-real-time feedback offered by such systems purports to help universities point themselves in the “right” direction, encouraging them to invest in faculty members who have the highest “impact.” Steering is the perfect metaphor here, because the Greek word “to steer” is the etymological root of “cybernetics.”

The origins of cybernetics are found in the ideas of prediction and control crystallized during World War II: using flows of information in order to understand and manipulate behavior. In cybernetics, systems can be understood as information processors, and any change in the state of a system can be seen as the result of flows of information. Generally speaking, cybernetics holds that in order for systems to function “perfectly” (perfection, narrowly defined here as some sort of ultimate efficiency), the right information must reach the right part of the system at the right time, regardless of whether those parts are people or anti-aircraft guns, frogs or nation-states. The trick, though, is to find a common “language” through which such a frictionless exchange might operate, and to make certain assumptions in order for this translation to be operationalized. During the Cold War, this language was mathematics, and the key assumption that enabled human behavior and mathematical models to become equivalent was that humans always behaved in their own self-interest.

Cybernetics, sometimes paradoxically, carries the neoliberal ideology in its march towards a society of ultimate efficiency and ultimate individual responsibility, where the human-computational-system makes rational decisions with perfect information and participates willingly in the marketplace. Around the same time as the advent of cybernetics, Friedrich Hayek, the forefather of what we today call neoliberalism, wrote: “It is more than a metaphor to describe the price system as a kind of machinery for registering change, or a system of telecommunications which enables individual producers to watch merely the movement of a few pointers, as an engineer might the hands of a few dials.”2 Hayek believed in the market as the ultimate information processor: the freer the flow of capital and information, the more perfectly the system would operate.

That compulsion towards ultimate efficiency and individual responsibility, hallmarks of Hayek’s neoliberal ideology, requires that educational “assets” be judged and translated into the language of the market.3 This requirement is predicated, of course, on the notion that a machinic efficiency which approaches instantaneity is some sort of admirable human goal. Further, such a requirement offloads risk onto the individual who then seeks “biographical” solutions—higher citation counts or more steps—to systemic problems like education, job security, or wellness.4 Through their absurd attempts to translate data about individual educational “assets” into the language of the market, university administrators must decide based on the information available to them whether or not faculty members should continue to receive investment. The more sophisticated the metrics become—not just about citations or number of publications, but about everything related to faculty output—the more administrators will rely on metrics to shape decision-making processes.5 As long as tech companies continue to build on the legacy of cybernetics in order to demonstrate the fundamentally computational nature of human existence, the influence of metrics on academia will be a positive feedback loop, with new metrics being developed, new decisions being based on those metrics, and new metrics being developed in response to the consequences of those decisions.

When articles are added to Google Scholar, the software combs the citations of the articles and adds those to the profiles of the authors who wrote the articles cited, altering the “scores” (such as the h-index and the i10-index) for those authors. In the case of scanned PDFs that are uploaded, the actual text of the citation might be interpreted through Optical Character Recognition, meaning only that text which is visible is interpreted as text. In the case of a Word document or PDF that is already “text” and not “image” (as a scan of a page of a journal would be), the “text” on the page does not necessarily need to be human-readable. Google Scholar combs through all the text on the page (visible to the human eye or not).
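The difference between what a reader sees and what a parser reads can be sketched in a few lines. This is only an illustration under stated assumptions: HTML stands in for a Word document or text-based PDF, the `NaiveTextExtractor` class and the sample `page` are hypothetical, and nothing here describes how Google Scholar itself parses documents. It shows only that an extractor which ignores styling returns white, tiny text alongside visible text.

```python
from html.parser import HTMLParser


class NaiveTextExtractor(HTMLParser):
    """Collects every text node and ignores all styling (color, size)."""

    def __init__(self):
        super().__init__()
        self.chunks = []

    def handle_data(self, data):
        text = data.strip()
        if text:
            self.chunks.append(text)


def extract_text(markup):
    """Return the list of text chunks found in the markup, visible or not."""
    parser = NaiveTextExtractor()
    parser.feed(markup)
    parser.close()
    return parser.chunks


# Hypothetical page: one visible reference, one styled white and tiny.
page = (
    '<p>Visible reference: Author A, "Paper One."</p>'
    '<p style="color:#ffffff;font-size:1px">'
    'Hidden reference: Author B, "Paper Two."</p>'
)

for chunk in extract_text(page):
    print(chunk)
```

Both references are printed, because the extractor never consults the `style` attribute. An OCR pass over a rendered image of the same page would, by contrast, have only the visible pixels to work with.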

The ranking algorithms of Google Scholar and other academic databases are based, at least to some degree, on who has cited your paper in their papers. The h-index and other metrics derived from these statistics have become powerful measures of scholarly “impact.” Not only can this focus on metrics for impact drive self-citation or the development of citation alliances (effectively search engine optimization, or SEO, for scholarship), but it can also drive the direction of research more broadly. At worst, papers are reduced to clickbait; at best, the research topics or publications that typically yield the highest metrics will drive the focus of research within a given field, displacing scholars’ pursuit of their own interests or of what they themselves believe to be best for the field when that diverges from the orthodoxy of their discipline. An increasing reliance on scholarly metrics also threatens to create a new type of quantified professoriate, oriented toward an “objective reality” that relies on an assumption of the self as computable and therefore only truly understandable via computation.
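For readers unfamiliar with these scores, a minimal sketch of the two indices follows. It uses the standard definitions: the h-index is the largest h such that h papers have at least h citations each, and the i10-index is the number of papers with at least ten citations. The function names and the citation counts are hypothetical, chosen only for illustration.

```python
def h_index(citation_counts):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citation_counts, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


def i10_index(citation_counts):
    """Number of papers with at least ten citations."""
    return sum(1 for count in citation_counts if count >= 10)


# Hypothetical scholar with six papers.
papers = [25, 12, 10, 4, 3, 0]
print(h_index(papers), i10_index(papers))      # 4 3

# The same scholar after every paper gains 50 extra citations.
inflated = [count + 50 for count in papers]
print(h_index(inflated), i10_index(inflated))  # 6 6
```

Once every paper clears the thresholds, both indices collapse to the number of papers published and no longer distinguish one paper's reception from another's.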

How might we intervene in order to devalue scholarly metrics? Might we be able to overflow the system such that it can no longer parse “impact” because everyone’s citation counts “explode”? What happens to the function and use of Google Scholar when everyone is citing everyone else? By creating a system that extends a paper’s citations to the papers and books that those papers and books have cited, and then again to related papers and books, a systematic overflowing of citation counts can be created such that Google’s ability to measure impact via citation counts plummets, forcing us, one hopes, to reckon with our reliance on such metrics in the first place to determine the value of scholarship.
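The extension of citations described above can be sketched on a toy citation graph. This is a simplified illustration and not the CitationBomb implementation: the graph, the paper labels, and the functions `citation_counts` and `expand_once` are all hypothetical, and the "related papers" step is left out. Each paper is simply made to cite everything that its own references cite.

```python
def citation_counts(graph):
    """Count how many papers cite each paper in the graph."""
    counts = {paper: 0 for paper in graph}
    for cited_papers in graph.values():
        for cited in cited_papers:
            counts[cited] = counts.get(cited, 0) + 1
    return counts


def expand_once(graph):
    """Make each paper also cite whatever its cited papers cite."""
    expanded = {}
    for paper, cited_papers in graph.items():
        extra = set()
        for cited in cited_papers:
            extra |= graph.get(cited, set())
        # A paper never cites itself.
        expanded[paper] = (cited_papers | extra) - {paper}
    return expanded


# Toy graph: each key cites the papers in its set.
graph = {
    "A": {"B", "C"},
    "B": {"C", "D"},
    "C": {"D"},
    "D": set(),
    "E": {"A"},
}

before = citation_counts(graph)
after = citation_counts(expand_once(graph))
print(sum(before.values()), sum(after.values()))  # 6 9
```

A single round of expansion raises the total number of recorded citations from six to nine in this toy graph, without any new scholarship having been written. Repeating the step, or adding related papers, would inflate the counts further.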

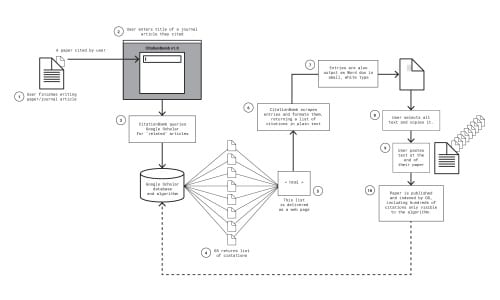

I propose the CitationBomb as such an intervention. The CitationBomb works as follows:

- The user opens the application and enters the title of a paper cited within a paper in process.

- CitationBomb finds related papers and books (per Google Scholar).

- CitationBomb outputs a Word document containing these citations in small, white text.

- The user copies and pastes the citations from the CitationBomb Word doc into the end of the paper in process, such that they are invisible in the Word document.

- When the user’s paper is published (assuming the invisible text remains), Google will eventually index all aspects of this paper in Google Scholar. (A user might be inclined to post a version with the white text on her own website or Academia.edu to ensure the version with the additional citations is indexed.)

Once indexed, I think that the following will happen:

- It will include all the citations (including those in white, because it is reading all text on the page, not just what is visible).

- The more users do this, the more Google Scholar will become overflowed with citations.

- This will make it difficult for the algorithms to make sense of influence or impact.

Despite its tactical origin, the CitationBomb is more a symbolic intervention for a number of reasons: first, for a noticeable shift to occur in citation counts, the number of people using it would need to be enormous. Second, because I do not have access to the proprietary algorithms that Google Scholar uses to index papers and put them into its database (these algorithms are protected intellectual property), I cannot know the extent to which the project can be successful in actually overflowing the commodity market for citations. Google Scholar is a black box on which many people’s professional lives depend. Even a widespread use of the CitationBomb might not do anything. Or, in a flood of citations, would Google use a neural net trained to prioritize articles that it determines to be “original,” worsening the situation? Either way, we will never know for sure if our tactics are successful. It’s my hope that in using it and thinking about what it stands for, we can come to an understanding of the changes that are taking place in academia—and in society as a whole—right under our feet. The ground on which “reality” is established is shifting. We turn to analytics, numbers, and therefore computation because it feels like there’s nothing else firm to stand on.

Today, this reliance on computation in order to orient us has led to a platformization of life. Tools and technologies are built on top of other tools and technologies. The infrastructure of “reality” is both physical and digital, and controlled by fewer and fewer corporations with increased capital accumulation. How can any mode of resistance operate successfully when the platforms that facilitate the resistance are also complicit in the propagation of the system being resisted? Any form of subversion that needs to use systems like a Google Cloud API (Cloud Vision, for example) or that relies on the particular structure of HTML tags on a given site is vulnerable to any change made by those platforms. This is one of the core problems with attempts to subvert the cybernetic quest for ultimate efficiency and the subjection of all life to systems of predictive analytics: that any tool built to subvert those systems will almost always rely on some of those very systems. In order for CitationBomb to continue working, it needs Google not to change certain things about the way Google Scholar works.

A comrade and I once gave a presentation on dealing with systems of algorithmic inference and recommendation, and we built a rather crude taxonomy of modes of subverting such systems: overflow (giving it too much data), expose, scramble (giving it the “wrong” data), disappear, or outright break (“hacking” à la Mr. Robot). In the case of academia, “opting out” is not an option for many of us whose livelihoods are predicated on our participation in it. Indeed, whether you are considering leaving Facebook or FitBit, employers, insurance providers, and other organizations to which you are tied often make it very difficult to opt out, with the threats of social decoupling or financial risk looming large. Such risks will only increase as organizations continue to adopt new analytic platforms in striving for efficiency and productivity.

In the end, CitationBomb probably won’t do much to “break” Google Scholar or change the way scholarship is valued in research academia. It will not end the neoliberal turn of the academic establishment nor will it put an end to “impact” assessments that rely on faulty analytics systems. I want to talk about what it means to learn, to produce knowledge, and to appreciate the work of those engaged in that production. I want to talk about how we understand ourselves as teachers and students of the world, in the world. A world that is not objective, but not fictitious either. I want to talk about a world in which the production of knowledge becomes “an instrument of adoration” for the unknown, for that which escapes language and cannot be found in the totalizing cybernetic dream of prediction and control.6 I don’t know what that world looks like, but I would like you to join me in finding out.

Download the CitationBomb here.

A special thanks to comrade Gabi Schaffzin for his help with this project.

Zach Kaiser is Assistant Professor of Graphic Design and Experience Architecture at Michigan State University. His research examines the role of design in the legitimation of a computable subjectivity as an aspirational ideal. Zach regularly exhibits and lectures nationally and internationally. He believes in the value of the sub-optimal.

1. Joeran Beel and Bela Gipp, “Google Scholar’s Ranking Algorithm: An Introductory Overview,” Proceedings of the 12th International Conference on Scientometrics and Informetrics 1 (Rio de Janeiro, Brazil: International Society for Scientometrics and Informetrics, 2009): 230–41. The Matthew Effect is often referred to as “The Matthew Effect of Accumulated Advantage.” Some choose to summarize it with the old adage “the rich get richer, the poor get poorer.”

2. Friedrich Hayek, “The Use of Knowledge in Society,” American Economic Review 35, no. 4 (1945): 519–30.

3. The history of education in the United States, writes David Labaree, can be divided into three competing ideologies that have dominated at different times: democratic equality, social efficiency, and social mobility. The democratic equality ideology views the goal of education as preparing students to be participatory citizens in a democracy. The social efficiency ideology sees the goal of education as training workers for producing a better society for all. And the third, the social mobility ideology, sees education as a marketplace with all students as individuals in competition with one another for social and financial position, in which a specific form of education (the highly valued credential) becomes a commodity. Labaree argues that the third of these ideologies has come to dominate American education today. David Labaree, “Public Goods, Private Goods: The American Struggle over Educational Goals,” American Educational Research Journal 34, no. 1 (1997): 39–81.

4. Zygmunt Bauman, Liquid Modernity (London: Polity, 2000).

5. For example, fitness data via partnerships with FitBit and smart furniture manufacturers to determine whether more fit faculty produce more “impact,” other biometric and psychometric indications to help faculty identify causes of stress that decrease productivity, weighting of metrics based on location, discipline, and type of institution.

6. See Vilém Flusser, On Doubt (Minneapolis: Univocal, 2014).